NVIDIA GTC 2026: A Look Back

Attending NVIDIA GTC 2026 made for one of the most intense and rewarding weeks of my professional career. The highlights:

- Getting first place in the NVIDIA GTC 2026 Vibe Hackathon against engineers from Big Tech and Ivy League students

- Getting certified as an NVIDIA Professional in Agentic AI

- Developing a deeper understanding of the latest in AI, graphics, and GPU computing

- Meeting and hearing from some of the most brilliant minds in the industry

A Hackathon Victory

The absolute highlight of the trip was competing in, and winning, the Vibe Hack Hackathon. The competition was fierce: we were up against top Ivy League students and talented engineers from Big Tech. Even though we were only a duo, we balanced trade-offs well enough to beat teams of up to four people. As a reward, my colleague and I were presented with an autographed RTX 5080 by NVIDIA CEO Jensen Huang.

Becoming a Certified NVIDIA Professional

While attending GTC, I decided to test my practical knowledge by taking the in-person exam for the NVIDIA Professional Agentic AI certification. I'm happy to report that I passed and walked away with my certificate. The exam was rigorous, but taking it at GTC added a great sense of achievement to the experience.

Technical Deep Dives

Beyond the hackathon and exams, I spent most of my time soaking in the technical sessions. Here are the core areas I explored and what I took away:

Agentic AI and Context Management

One of the most pressing topics was how companies are dealing with context management and long-running agentic tasks. We're moving beyond simple chat interfaces into autonomous agents that need to maintain state over extended periods, and learning about the varied architectural approaches to this was fascinating.
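To make the context problem concrete, here is a minimal sketch of one common pattern: keep recent turns verbatim under a token budget and fold older turns into a running summary. This is my own illustration, not an approach attributed to any GTC session. `RollingContext`, `count_tokens`, and `summarize` are hypothetical names, the tokenizer is a crude word count, and the summarizer is a placeholder for what would normally be a model call.

```python
from dataclasses import dataclass, field


def count_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word (a real tokenizer differs).
    return len(text.split())


def summarize(previous: str, evicted: list[str]) -> str:
    # Placeholder for an LLM summarization call: keep the first three words
    # of each evicted message and append them to the existing summary.
    heads = [" ".join(m.split()[:3]) for m in evicted]
    return " | ".join(([previous] if previous else []) + heads)


@dataclass
class RollingContext:
    budget: int                                       # max tokens kept verbatim
    summary: str = ""                                 # compressed memory of evicted turns
    recent: list[str] = field(default_factory=list)   # turns kept word for word

    def add(self, message: str) -> None:
        self.recent.append(message)
        evicted = []
        # Evict the oldest turns until the verbatim window fits the budget,
        # always keeping at least the newest message.
        while len(self.recent) > 1 and sum(map(count_tokens, self.recent)) > self.budget:
            evicted.append(self.recent.pop(0))
        if evicted:
            self.summary = summarize(self.summary, evicted)

    def prompt(self) -> str:
        # What would be sent to the model on the next step.
        parts = ([f"[summary] {self.summary}"] if self.summary else []) + self.recent
        return "\n".join(parts)


ctx = RollingContext(budget=8)
ctx.add("user wants a flight to Tokyo")
ctx.add("agent searched three airlines today")
print(ctx.prompt())
```

The trade-off is between fidelity and cost: a larger budget keeps more detail verbatim, while a smaller one leans harder on the quality of the summarizer.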

Reinforcement Learning Paradigms

I also dove deep into reinforcement learning, specifically looking at RLHF (Reinforcement Learning from Human Feedback) alongside group policy RL. Seeing the nuances of how these approaches steer model behavior at scale gave me a lot to think about for future engineering projects. I previously had only a vague idea of when to fine-tune and when to reach for the various RL methods, but now I feel I have a much stronger intuition for it. At a high level, fine-tuning is great for teaching a model ground-truth, happy-path information, while RL methods are great for teaching a model how to behave in the real world with edge cases and unexpected inputs.
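As a small illustration, and assuming the group-based RL approach mentioned above is in the family of Group Relative Policy Optimization (GRPO), the core idea can be sketched in a few lines: sample several responses to the same prompt, score each one, and measure every response against its own group instead of against a learned value model. The function name and the use of population standard deviation are my choices for this sketch, not details taken from a session.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the other samples for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; implementations vary on this detail
    # eps avoids division by zero when every sample receives the same reward.
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one prompt, scored 1.0 if correct and 0.0 otherwise.
advantages = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
print(advantages)
```

Responses that beat their group's average get a positive advantage and are reinforced; the rest are pushed down. If every sample earns the same reward, all advantages are zero and that prompt contributes no learning signal.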

Graphics, Compression, and DLSS 5

On the graphics front, learning about Gaussian splats and the underlying mechanics of NVIDIA's DLSS 5 was incredible. I learned how integrating AI models natively allows for far more efficient compression and decompression of graphical assets, fundamentally shifting how we think about rendering pipelines.

cuTile: Python over CUDA

As someone who loves building core technologies, learning about cuTile was a major unlock. Using Python as a higher-level abstraction over CUDA programming makes GPU acceleration vastly more approachable without sacrificing low-level control when you need it.

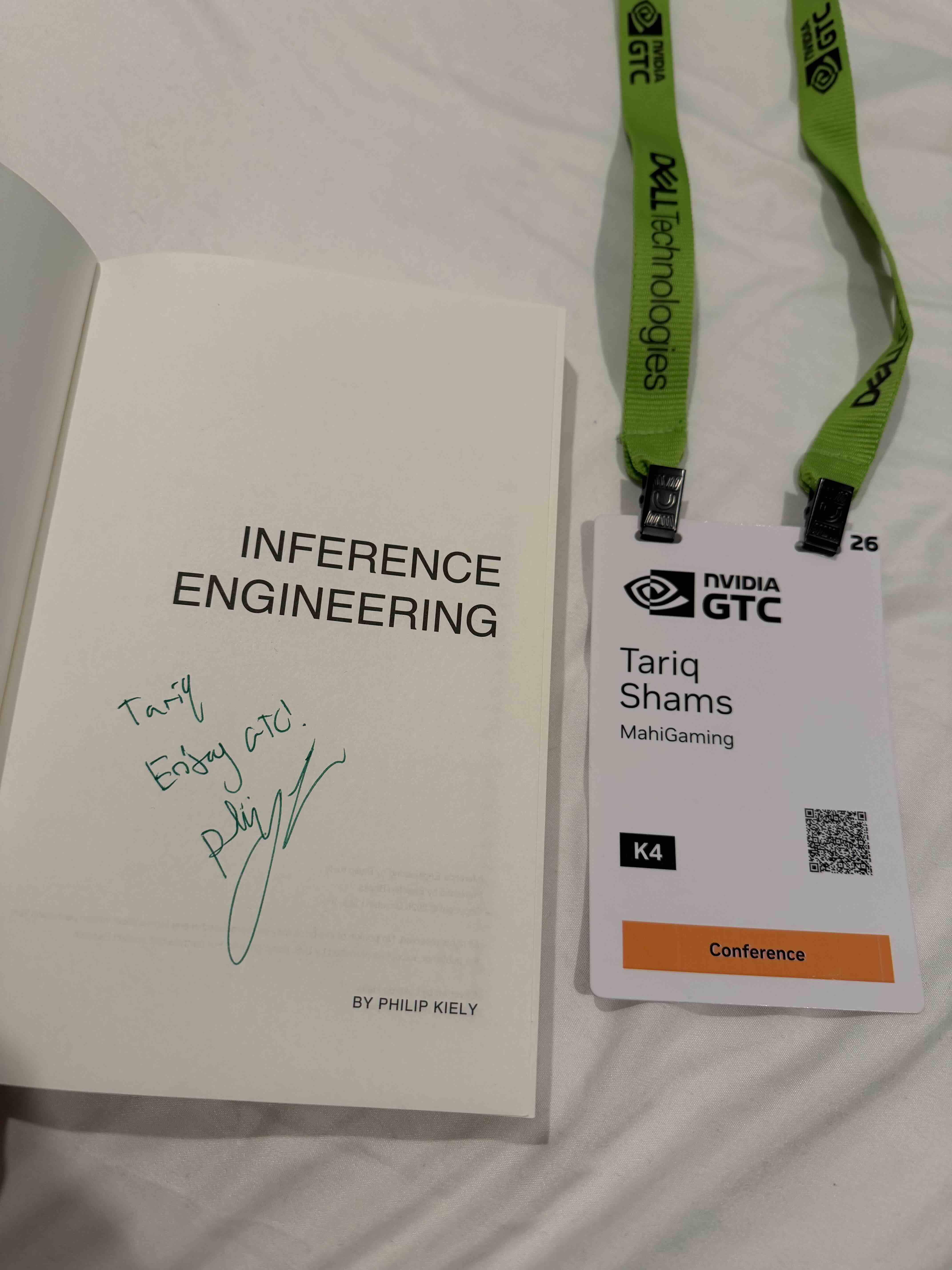

Inference Engineering and LLM Optimization

Finally, I spent a significant amount of time learning about inference engineering. I even managed to get a signed copy of the Inference Engineering book directly from the senior inference engineer at Baseten who wrote it! Thanks, Philip Kiely!

The focus was on hardcore performance optimizations in LLM inference:

- KV cache optimization

- Layer merging

- Request batching

Understanding how the NVIDIA tech stack (Dynamo, Triton Inference Server, NeMo, etc.) can help handle these optimizations automatically is going to drastically change how I deploy models moving forward.
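To show why the KV cache matters, here is a toy single-head attention loop in plain Python. It is a sketch under heavy simplifying assumptions, not how any production engine implements it: `project` is an elementwise scale standing in for a real linear layer, the query is the raw token vector, and all names are hypothetical. The point is only that caching keys and values gives the same outputs while projecting each token once instead of once per decoding step.

```python
import math


def project(x, w):
    # Toy "linear layer": elementwise scale standing in for a K or V projection.
    return [xi * wi for xi, wi in zip(x, w)]


def attend(query, keys, values):
    # Scaled dot-product attention with a numerically stable softmax.
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    peak = max(scores)
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    dims = range(len(values[0]))
    return [sum(w * v[d] for w, v in zip(weights, values)) / total for d in dims]


class KVCache:
    def __init__(self, w_k, w_v):
        self.w_k, self.w_v = w_k, w_v
        self.keys, self.values = [], []
        self.projections = 0  # counts K/V projections actually computed

    def step(self, token_vec):
        # Only the new token is projected; earlier keys and values are reused.
        self.keys.append(project(token_vec, self.w_k))
        self.values.append(project(token_vec, self.w_v))
        self.projections += 2
        return attend(token_vec, self.keys, self.values)


def decode_without_cache(tokens, w_k, w_v):
    outputs, projections = [], 0
    for t in range(1, len(tokens) + 1):
        # Every step re-projects the entire prefix from scratch.
        keys = [project(x, w_k) for x in tokens[:t]]
        values = [project(x, w_v) for x in tokens[:t]]
        projections += 2 * t
        outputs.append(attend(tokens[t - 1], keys, values))
    return outputs, projections


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
w_k, w_v = [0.5, 2.0], [1.0, -1.0]

cache = KVCache(w_k, w_v)
cached_outputs = [cache.step(tok) for tok in tokens]
plain_outputs, plain_projections = decode_without_cache(tokens, w_k, w_v)
print(cache.projections, plain_projections)
```

Without the cache, projection work grows quadratically with sequence length; with it, the work grows linearly at the cost of memory to hold the cached keys and values. That memory cost is what KV cache optimization and request batching have to manage.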

Overall, GTC 2026 was a massive level-up. Winning a hackathon against some of the most talented engineers and students in the world was surreal, and I earned a new certification. Most importantly, I left with a much deeper understanding of the systems that will power the next generation of AI.